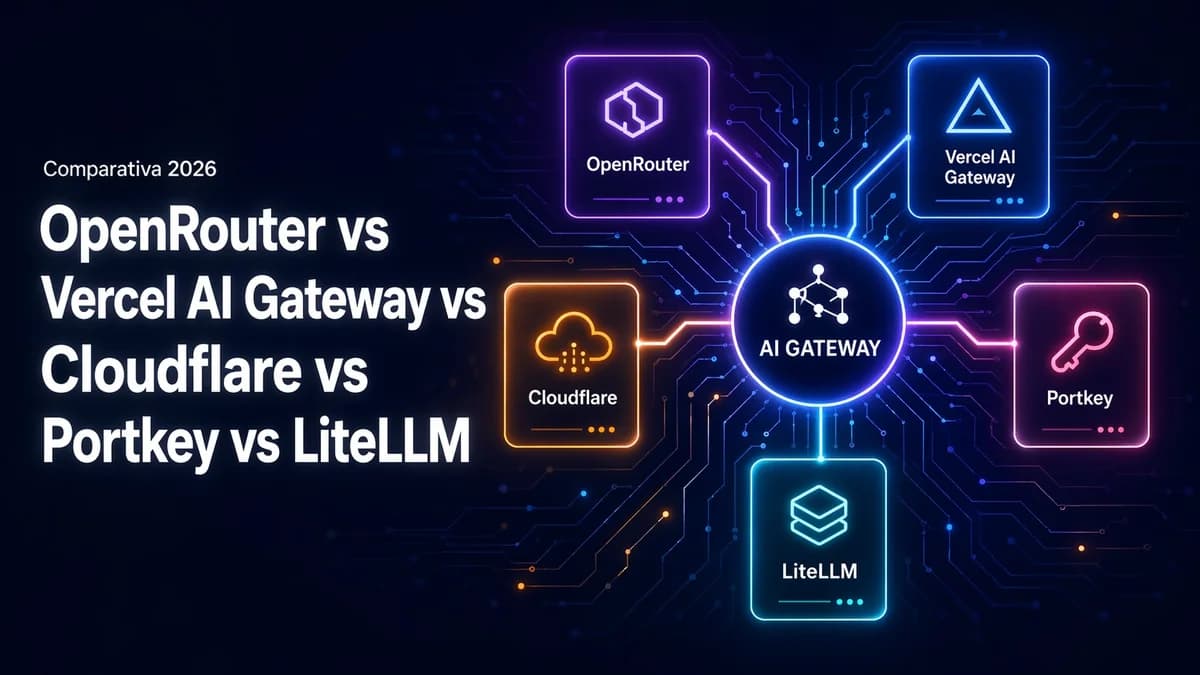

OpenRouter vs Vercel AI Gateway vs Cloudflare vs Portkey vs LiteLLM, a 2026 comparison

A cross-cutting comparison of AI Gateways in 2026 based on the things that actually matter: catalog, cost, latency, observability, failover, privacy, operations, and lock-in. When to pick each one, and why they aren't mutually exclusive.

The question comes up every time I add AI to a new project. Do I call the provider SDK directly, or put an aggregator in the middle? And if I use an aggregator, which one? Over the last few months I've used OpenRouter, Vercel AI Gateway, and direct calls to the official SDKs, and I've seriously evaluated Cloudflare AI Gateway, Portkey, and LiteLLM for other pieces. This post is the cross-cutting comparison I wish I'd had before starting, not as a table (which will be outdated tomorrow) but by the axes that actually carry weight in the decision.

There's a companion post about how I migrated three projects from OpenRouter to Vercel AI Gateway, which is a chronological walkthrough. This post is the opposite: a map of the terrain as it looks today.

What an AI Gateway is, and what it isn't

An AI Gateway is a layer between your code and model providers. It exposes a unified API (almost always compatible with the OpenAI Chat Completions dialect, which has become the de facto standard) and handles talking to each provider behind the scenes. You ask for anthropic/claude-sonnet-4 or openai/gpt-5, the gateway translates the request to the corresponding provider SDK, and gives you back a normalized response.
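As a minimal sketch of what "unified API" means in practice, the helper below builds a Chat Completions request without sending it. The gateway URL, the key, and the helper name `buildChatRequest` are placeholders of mine, not any vendor's real values; the only thing assumed about the gateway is that it exposes an OpenAI-compatible `/chat/completions` route.

```typescript
// Only the fields used in this sketch; real request bodies accept many more.
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface GatewayConfig {
  baseURL: string; // the gateway's OpenAI-compatible base URL (placeholder below)
  apiKey: string;
}

interface PreparedRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Builds the HTTP request without sending it, so the same code serves any
// gateway that speaks the Chat Completions dialect.
function buildChatRequest(
  gateway: GatewayConfig,
  model: string,
  messages: ChatMessage[],
): PreparedRequest {
  return {
    // Strip trailing slashes so the path is joined with exactly one slash.
    url: `${gateway.baseURL.replace(/\/+$/, "")}/chat/completions`,
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      Authorization: `Bearer ${gateway.apiKey}`,
    },
    body: JSON.stringify({ model, messages }),
  };
}

// Hypothetical gateway: changing the model is a one-string change.
const exampleGateway: GatewayConfig = {
  baseURL: "https://gateway.example.com/v1/",
  apiKey: "sk-placeholder",
};
const exampleMessages: ChatMessage[] = [
  { role: "user", content: "Summarize this ticket in one line." },
];
const claudeRequest = buildChatRequest(exampleGateway, "anthropic/claude-sonnet-4", exampleMessages);
const gptRequest = buildChatRequest(exampleGateway, "openai/gpt-5", exampleMessages);
```

Each prepared request can be handed to `fetch(request.url, request)` or to whatever HTTP client the project already uses. The business logic never sees which provider ends up answering.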

This solves three real problems. First, it decouples your code from a specific provider, so you can change models with a string instead of touching business logic. Second, it centralizes observability: logs, token usage, latencies, and errors all go through the same place regardless of the model. Third, it gives you a single point where you can apply fallbacks, retries, rate limits, and quotas.

What a gateway doesn't solve is making good choices. If your prompt is poorly designed, if your context is a dumpster fire, or if you're not caching when you could be, no gateway is going to save you. It's an operational layer, not an intelligent one.

The 2026 players

The landscape has moved a lot in a year. These are the six that matter today, along with the mental model that fits each one.

OpenRouter. The veteran. A managed gateway with the broadest model catalog on the market, an OpenAI-compatible API, and pay-as-you-go pricing with a markup over the provider price that's usually in the low single digits. You can pay with their credits or bring your own keys for each provider (BYOK). It's the default option when you don't have a specific reason to pick something else.

Vercel AI Gateway. The newer option with the best integration. A managed gateway, a broad catalog (not as broad as OpenRouter, but it covers the important stuff), native integration with Vercel's AI SDK and the Next.js ecosystem. If you deploy on Vercel, the gateway resolves internal DNS and simplifies operations. Pricing is credit-based, and you consume credits based on tokens. BYOK is available.

Direct calls to the Anthropic, OpenAI, or Google SDK. This isn't a gateway, but it's the alternative everything else gets compared against. Lowest latency, access to proprietary features (prompt caching from Anthropic, batch API, computer use, priority tiers), and official pricing with no markup. In return, your code knows too much about the provider, and changing later means rewriting.

Cloudflare AI Gateway. A different beast. It doesn't charge a markup for forwarding requests; you pay the final provider with your own keys. It charges by event volume and for related services (cache, rate limits, feedback). It looks more like an observability layer than a model marketplace. If you already live in Cloudflare (Workers, R2, D1, Queues), it fits like a glove.

Portkey. The gateway built for teams with enterprise requirements. Very rich observability, guardrails, virtual keys to split quota across teams, SSO, auditing. You can use it as a managed service or deploy it in your own infrastructure. Pricing is based on request volume, not a markup on the provider.

LiteLLM. An open source, self-hosted proxy. All the value of a gateway (unified API, fallbacks, logging) without a commercial middleman. You operate it yourself, pay the provider directly, and keep the service running. Ideal when you don't want to depend on anyone and the operational work is worth it.

Axis 1, model catalog

The first concrete question is which models you can use without friction.

OpenRouter leads by a mile. It has everything from frontier models by Anthropic, OpenAI, and Google to open weights served by Together, Fireworks, DeepInfra, and the rest. If a model exists and it's even vaguely commercial, OpenRouter has it. During exploration and prototyping, that catalog saves a lot of time.

Vercel AI Gateway covers the important stuff (Anthropic, OpenAI, Google, Mistral, popular open models) but it isn't encyclopedic. For serious production work with established models, it's enough. For experimenting with niche models, it isn't.

Cloudflare AI Gateway is a pass-through. Any provider you can call with your own token, you can call through Cloudflare. It's not a curated catalog, it's a door to the providers you already have.

Portkey and LiteLLM are similar here. They don't have their own catalog, they're SDK aggregators. You can point them at any compatible provider, which basically means all the relevant ones in 2026.

Direct calls are the opposite extreme. One provider, its full native catalog.

Axis 2, cost

There are three different pricing models mixed together here.

Markup on top of the provider (OpenRouter, Vercel AI Gateway). You pay a bit more than the model's official price. The markup is modest, but it's there, and on high-traffic projects you'll notice it by the end of the month. In return, they centralize billing and abstract away managing multiple accounts.

No markup, you pay for event volume (Cloudflare AI Gateway). They don't charge for passing requests through if the calls use your keys. They charge for added features (cache, rate limit, analytics) and for sustained volume. For high traffic with expensive models, this works out much cheaper.

Cost based on request volume (Portkey, managed LiteLLM). Pricing is per million requests processed, regardless of the model price. If you're using expensive models, this works out well. If you're using cheap models but making lots of calls, it works out worse.

No gateway (direct calls). You pay the official price. For a project with a single provider and moderate volume, it's still the cheapest option in absolute terms, although you lose everything else.

For small projects, gateway cost is noise next to model cost. For big projects, the pricing model absolutely changes TCO. There's no universal answer: it depends on how much you're calling and what you're calling.
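To make the difference between a percentage markup and per-request pricing concrete, here is a small sketch of the arithmetic. Every rate in it is a made-up placeholder (a 5% markup, $100 per million requests), not any vendor's real price list; plug in the numbers from the pricing pages you're actually comparing.

```typescript
interface Workload {
  requestsPerMonth: number;
  // Average tokens in + out per request, priced at the provider's list price.
  avgProviderCostPerRequestUsd: number;
}

// Gateway fee when the gateway charges a percentage on top of provider spend.
function markupCost(w: Workload, markupRate: number): number {
  const providerSpend = w.requestsPerMonth * w.avgProviderCostPerRequestUsd;
  return providerSpend * markupRate;
}

// Gateway fee when the gateway charges per request, whatever the model costs.
function perRequestCost(w: Workload, usdPerMillionRequests: number): number {
  return (w.requestsPerMonth / 1e6) * usdPerMillionRequests;
}

// Provider cost per request at which both pricing models charge the same fee.
// Above it, per-request pricing is cheaper; below it, the markup is cheaper.
function breakEvenCostPerRequest(markupRate: number, usdPerMillionRequests: number): number {
  return usdPerMillionRequests / 1e6 / markupRate;
}

// Two hypothetical workloads with very different shapes.
const expensiveModel: Workload = { requestsPerMonth: 1e6, avgProviderCostPerRequestUsd: 0.02 };
const cheapModel: Workload = { requestsPerMonth: 1e7, avgProviderCostPerRequestUsd: 0.0002 };
```

With those placeholder rates, the expensive-model workload pays roughly ten times more under the markup than under per-request pricing, and the cheap-model workload is the other way around. That is the asymmetry the axis describes.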

Axis 3, latency

A gateway adds a hop. That hop costs milliseconds.

Vercel AI Gateway, deployed in the same region as your app, usually adds a few tens of milliseconds. If you deploy on Vercel and use the gateway, resolution is practically local. It's the gateway with the smallest latency penalty I've measured.

OpenRouter lives in its own cloud, with good routing but without the advantage of the same edge. The penalty is visible but not worrying for conversational responses.

Cloudflare AI Gateway runs on Cloudflare's edge. If you're already serving from Workers or from behind Cloudflare, the extra hop is minimal. If not, it loses part of its advantage.

Portkey and managed LiteLLM have acceptable latencies, but if you deploy them yourself, latency depends on where you put them. Self-hosted LiteLLM in the same VPC as your app can be almost free in latency terms.

Direct calls are the baseline, with no extra hop. For flows where every millisecond matters (completions in the hot path, products that show streaming), that extra hop does matter, and it's worth measuring.
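"Worth measuring" can be as simple as timing the same call both ways and comparing percentiles rather than averages. The sketch below is one way to do it; the helper names are mine, and it uses the nearest-rank percentile definition and millisecond-resolution `Date.now()` for simplicity.

```typescript
// Nearest-rank percentile over latency samples in milliseconds.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  const index = Math.min(sorted.length - 1, Math.max(0, rank - 1));
  return sorted[index];
}

// Times one async call, for example "send request and wait for the first streamed chunk".
async function timeMs(call: () => Promise<unknown>): Promise<number> {
  const start = Date.now();
  await call();
  return Date.now() - start;
}

// Extra latency the gateway adds at the median and at the tail.
function gatewayOverhead(direct: number[], viaGateway: number[]): { p50: number; p95: number } {
  return {
    p50: percentile(viaGateway, 50) - percentile(direct, 50),
    p95: percentile(viaGateway, 95) - percentile(direct, 95),
  };
}
```

Collect a few dozen samples per path with `timeMs`, from the region where the app really runs, and look at the p95 difference as well as the median. The tail is where an extra hop tends to show up.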

Axis 4, observability

This is where the differences are biggest, and quietest.

Portkey is the most complete. Per-request view, per model, per team (virtual keys), cost tracking, guardrails, latency percentiles, export to external tools. If you have a team looking at numbers every week, this is the one that needs the least built on top.

Cloudflare AI Gateway got serious in 2026. Metrics, logs, cache hit rate, rate limit analytics, all integrated with the rest of the Cloudflare stack.

OpenRouter gives you the basics: usage by key, a list of requests, some charts. Vercel AI Gateway has improved with a decent panel, integrated into the Vercel dashboard.

Self-hosted LiteLLM gives you whatever you hook up. If you connect it to your own Grafana or Langfuse, it can be the most powerful of the lot. If you don't configure it, it's the one that gives you the least.

Direct calls depend on the SDK. Official SDKs have gotten much better at instrumentation, but correlating calls across three different providers with three different telemetry systems is still manual work.
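If you do go direct with several providers, the manual work mostly boils down to mapping each SDK's response into one record shape and aggregating from there. A minimal sketch, assuming you fill in a hypothetical `CallRecord` yourself after each call:

```typescript
// One normalized record per model call, whichever SDK produced it.
interface CallRecord {
  provider: string;
  model: string;
  inputTokens: number;
  outputTokens: number;
  latencyMs: number;
  ok: boolean;
}

interface ModelSummary {
  calls: number;
  errors: number;
  totalTokens: number;
  avgLatencyMs: number;
}

// Groups records by "provider/model" and keeps running totals per group.
function summarizeByModel(records: CallRecord[]): Record<string, ModelSummary> {
  const summaries: Record<string, ModelSummary> = {};
  for (const r of records) {
    const key = `${r.provider}/${r.model}`;
    const s = summaries[key] ?? { calls: 0, errors: 0, totalTokens: 0, avgLatencyMs: 0 };
    // Incremental mean, so no per-call latency list has to be kept around.
    s.avgLatencyMs = (s.avgLatencyMs * s.calls + r.latencyMs) / (s.calls + 1);
    s.calls += 1;
    if (!r.ok) s.errors += 1;
    s.totalTokens += r.inputTokens + r.outputTokens;
    summaries[key] = s;
  }
  return summaries;
}
```

This is roughly the view a gateway dashboard gives you for free, which is a fair way to price what the observability axis is worth to you.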

Axis 5, failover and retries

A gateway without failover loses half its appeal.

Portkey shines here. You can define fallback chains, retries with backoff, and conditional routing based on error type. If a model returns 429 or 5xx, the next candidate in the chain gets the request without your code even noticing.
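To show what a gateway is doing for you here, this is a hand-rolled sketch of a fallback chain with exponential backoff. It is not Portkey's implementation or configuration format; the types, option names, and the rule "429 and 5xx are retryable, anything else fails fast" are assumptions made for the example.

```typescript
// Minimal result of one model call: HTTP-style status plus the response text.
interface Attempt {
  status: number;
  text: string;
}

type ModelCall = (model: string) => Promise<Attempt>;

interface FallbackOptions {
  retriesPerModel: number; // extra attempts on the same model before moving on
  baseDelayMs: number; // first backoff delay; doubles on each retry
  sleep?: (ms: number) => Promise<void>; // injectable so tests don't really wait
}

const isSuccess = (status: number): boolean => status >= 200 && status < 300;
const isRetryable = (status: number): boolean => status === 429 || status >= 500;

const defaultSleep = (ms: number): Promise<void> =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Tries each model in order. Retryable errors are retried with backoff and then
// handed to the next model; any other error aborts the whole chain.
async function callWithFallback(
  models: string[],
  call: ModelCall,
  opts: FallbackOptions,
): Promise<{ model: string; text: string }> {
  const sleep = opts.sleep ?? defaultSleep;
  for (const model of models) {
    for (let attempt = 0; attempt <= opts.retriesPerModel; attempt++) {
      const result = await call(model);
      if (isSuccess(result.status)) return { model, text: result.text };
      if (!isRetryable(result.status)) {
        throw new Error(`${model} failed with non-retryable status ${result.status}`);
      }
      if (attempt < opts.retriesPerModel) {
        await sleep(opts.baseDelayMs * Math.pow(2, attempt));
      }
    }
  }
  throw new Error("all models in the fallback chain failed");
}
```

Forty-odd lines is not much, but it is code you own, test, and keep consistent across services. That is the part a gateway takes off your hands.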

LiteLLM has this natively too, with a YAML configuration that covers most cases.

OpenRouter lets you define fallback models per request, which is enough for the common cases. If a model is down or rate-limited, you can tell it which one to try next.
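As a sketch of what a per-request fallback looks like on the wire: my understanding is that OpenRouter accepts an ordered `models` array in the request body and tries the entries in turn, but treat that field name as an assumption and check the current API reference before relying on it. The helper name is mine.

```typescript
interface Message {
  role: "system" | "user" | "assistant";
  content: string;
}

// Assumption: the gateway accepts an ordered `models` array and tries each
// entry in turn when the previous one is unavailable or rate-limited.
function buildFallbackBody(primary: string, fallbacks: string[], messages: Message[]): string {
  // Drop duplicates so the primary never appears again as its own fallback.
  const ordered = [primary, ...fallbacks].filter((m, i, all) => all.indexOf(m) === i);
  return JSON.stringify({ models: ordered, messages });
}
```

The design point is that the fallback decision travels with the request, so there is no retry loop in your code at all.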

Vercel AI Gateway has automatic retries and added model fallback in 2026, though with less granularity than Portkey.

Cloudflare AI Gateway has retries and failover through configuration, integrated with its rules. It works well if your error logic isn't too sophisticated.

With direct calls, anything you want to orchestrate, you build yourself. Nothing is automatic.

Axis 6, privacy and data residency

The least examined axis, and the most important if you work with sensitive data.

Direct calls are the simplest. You sign the provider's agreement, you know its guarantees (zero data retention arrangements at Anthropic and OpenAI for eligible customers, European residency through Google Vertex, etc.), and that's it. Each provider has its own options, and you apply them in your contract with them.

A managed gateway adds another party: the data goes through its infrastructure before reaching the provider. You have to add its data processing agreement to yours, review its policy, check whether it also has zero data retention, and know which region it runs in. This isn't a problem, but it is one more paperwork step.

Cloudflare AI Gateway is interesting here because it doesn't retain request bodies by default, only metadata, unless you explicitly enable logging. That's a different privacy model from OpenRouter or Vercel.

Self-hosted LiteLLM and direct calls give you full control. There are no third parties in the path other than the model provider. For projects with serious personal data, that's the shortest path.

Axis 7, operations and maintenance

Who gets the service back on its feet if something breaks on a Sunday?

OpenRouter, Vercel AI Gateway, Cloudflare AI Gateway, and managed Portkey are managed. They break on their on-call rotation, not yours. Whether they publish an SLA or not, you usually notice incidents when they're already fixing them.

Self-hosted LiteLLM and self-hosted Portkey are your responsibility. If your app depends on the gateway and the gateway goes down, your app goes down. It's worth it if you already run infrastructure, less so if you're a team without on-call.

Direct calls tie you only to provider availability, which in 2026 is more reliable than it was a year ago. The downside is that if that provider has an incident, you don't have any natural fallback unless you've built it yourself.

Axis 8, lock-in

Changing gateways shouldn't be a dramatic decision, but some of them close more doors than others.

OpenRouter and Vercel AI Gateway speak OpenAI Chat Completions, just like LiteLLM and Portkey. Switching between them is mostly a matter of changing baseURL and keys. The real lock-in is low.
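A sketch of what "changing baseURL and keys" can look like if you keep the gateway choice in one place. The base URLs below are the ones I believe each product documents for its OpenAI-compatible endpoint at the time of writing; verify them before use. The LiteLLM entry is just a typical local proxy address, and some gateways (Portkey, for example) expect extra headers that this sketch leaves out.

```typescript
type GatewayName = "openrouter" | "vercel" | "portkey" | "litellm";

interface ClientConfig {
  baseURL: string;
  apiKey: string;
}

// Assumed base URLs; check each vendor's documentation before relying on them.
const BASE_URLS: Record<GatewayName, string> = {
  openrouter: "https://openrouter.ai/api/v1",
  vercel: "https://ai-gateway.vercel.sh/v1",
  portkey: "https://api.portkey.ai/v1",
  litellm: "http://localhost:4000", // self-hosted: whatever host and port you deployed
};

// Returns the two values an OpenAI-compatible client needs to be pointed elsewhere.
function resolveGateway(
  name: GatewayName,
  keys: Partial<Record<GatewayName, string>>,
): ClientConfig {
  const apiKey = keys[name];
  if (!apiKey) throw new Error(`no API key configured for ${name}`);
  return { baseURL: BASE_URLS[name], apiKey };
}
```

If the rest of the codebase only ever sees a `ClientConfig`, a migration between these four really is a configuration change plus a round of testing.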

Cloudflare AI Gateway asks you to think like a pass-through: configuration lives per gateway, and each gateway is a different endpoint. Migrating away means rewriting URLs and giving up its cache.
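The per-gateway endpoint is easiest to see as a URL builder. The pattern below (account ID, then gateway ID, then provider slug) matches the format I know from Cloudflare's documentation; the function name and the sample IDs in the usage note are placeholders.

```typescript
// Builds the provider-specific endpoint for one Cloudflare AI Gateway instance.
function cloudflareGatewayUrl(accountId: string, gatewayId: string, provider: string): string {
  const parts = [accountId, gatewayId, provider].map((part) => encodeURIComponent(part));
  return `https://gateway.ai.cloudflare.com/v1/${parts.join("/")}`;
}
```

Calling `cloudflareGatewayUrl("acc123", "prod-gateway", "anthropic")` yields a base URL you hand to the provider's own SDK together with the provider's own key. That is why leaving is a matter of pointing the URLs back at the provider.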

Calling a proprietary SDK directly does introduce lock-in, especially if you use features that only exist in one provider (prompt caching from Anthropic, structured outputs from OpenAI, grounding from Google, tool use with slightly different semantics). Changing providers means rebuilding those parts.

When to choose each one

After going through all eight axes, this is the short guide I use.

If you're exploring models and prototyping, OpenRouter. The huge catalog and usage-based cost with no minimums let you try ten models in an afternoon without opening ten accounts.

If you already deploy on Vercel and use the AI SDK, Vercel AI Gateway. The integration with the SDK and your stack makes up for the rest.

If your application lives on Cloudflare, Cloudflare AI Gateway. No per-request markup, edge cache, integrated observability. It fits like a glove if you're already in that world.

If you're a team with formal requirements (auditing, SSO, guardrails, team quotas), Portkey. It's the one that saves you the most work on top.

If you prioritize zero commercial dependencies and you have operational savvy, LiteLLM self-hosted. The value of open source is real when you can maintain it.

If your product is tightly coupled to a single provider and you use its proprietary features heavily, direct calls. A gateway would cut off features you need and add latency.
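The short guide above can be written down as a function, which is a handy way to notice when two rules collide. The guide itself doesn't rank the rules, so the order of the checks below is my own assumption (hard constraints first, hosting second, OpenRouter as the default); the profile fields are hypothetical names.

```typescript
type Choice =
  | "OpenRouter"
  | "Vercel AI Gateway"
  | "Cloudflare AI Gateway"
  | "Portkey"
  | "LiteLLM (self-hosted)"
  | "Direct provider SDK";

interface ProjectProfile {
  heavyProprietaryFeatures: boolean; // e.g. relies on one provider's prompt caching
  formalRequirements: boolean; // auditing, SSO, guardrails, team quotas
  wantsNoCommercialDependency: boolean;
  hasOnCall: boolean; // someone can fix the proxy at three in the morning
  deploysOnVercel: boolean;
  livesOnCloudflare: boolean;
}

// Encodes the guide as ordered rules; the first match wins.
function pickGateway(p: ProjectProfile): Choice {
  if (p.heavyProprietaryFeatures) return "Direct provider SDK";
  if (p.formalRequirements) return "Portkey";
  if (p.wantsNoCommercialDependency && p.hasOnCall) return "LiteLLM (self-hosted)";
  if (p.deploysOnVercel) return "Vercel AI Gateway";
  if (p.livesOnCloudflare) return "Cloudflare AI Gateway";
  return "OpenRouter"; // exploring and prototyping: the default
}
```

Reorder the checks to match your own priorities. The point is that writing them in order forces you to decide which constraint wins when a Vercel-hosted project also leans heavily on one provider's features.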

They aren't mutually exclusive

The decision isn't binary. In the three projects where I'm adding AI, I use combinations, not a single layer.

For research and prototypes, OpenRouter as the primary. As soon as a project settles on one or two models, I evaluate whether it's worth moving to Vercel AI Gateway (if I deploy on Vercel) or direct calls (if I use proprietary features heavily). Once the project has high traffic, I put Cloudflare AI Gateway in front for cache and observability, with the keys pointing at the provider directly.

That layering sounds complicated, but it's the honest way to get the best out of each one. The gateway for catalog, the edge for cache, the direct provider for proprietary features.

What doesn't change even if the players do

If the landscape has changed this much in a year, it'll change again. These four questions are what I use to avoid getting stuck with yesterday's decision.

How much does it cost to change? If migrating from one gateway to another is a day's work, the decision is cheap and you can afford to be wrong. If it's a sprint, think twice.

What part of the value comes from the gateway, and what part comes from the provider? If 90% of your value comes from one specific model with proprietary features, a gateway takes away more than it gives. If 90% comes from talking to multiple models and comparing them, a gateway is half the battle.

What data goes through it? If it's internal logs, whatever. If it's user messages, documents, personal data, the privacy axis stops being secondary.

How much operational pain can you take? Self-hosted is tempting until the first incident at three in the morning. If you don't have on-call, it isn't for you.

My choice today

If you forced me to keep just one layer for a new 2026 project, I'd pick OpenRouter. The catalog, the predictable cost, and the time it saves while experimenting are hard to beat when you're getting started. Once the project stabilizes, I reevaluate.

If the project deploys on Vercel, I move to Vercel AI Gateway. If it lives on Cloudflare, I move to Cloudflare AI Gateway. If it uses Anthropic prompt caching seriously, I move to direct calls. None of those migrations are painful if you started out speaking Chat Completions.

The main thing I've taken away from using several of them is that a gateway isn't a religion. It's an operational decision with a short shelf life, one you should revisit every few months, and one that should be easy to rewind. If picking one closes doors, you probably picked the wrong one.

Jose, author of the blog

QA Engineer. I write out loud about automation, AI and software architecture. If something here helped you, write to me and tell me about it.
